Continuing our series of commentaries on Makin and Orban de Xivry’s article on common Statistical Mistakes, let’s look at #5: Small Samples. (Previous posts: #1-2, #3, #4.)

This issue is simple but profound, and its prevalence is, I’ll argue, tied to more fundamental problems with how we do science. The mistake: drawing conclusions from studies with small numbers of samples (“small N”). This comes up all the time in one of my own areas of interest, the gut microbiome, the literature on which is filled with studies involving very few subjects, whether animals or people.

Few samples, many problems

The reason small samples are a problem should be obvious: with few samples, our confidence in any estimate must be weak; fluctuations are large for small N. If we flip a fair coin 10 times, the probability of getting exactly 6 heads is about 20%; if we flip it 100 times, the probability of getting exactly 60 heads is about 1%. It would be foolish to conclude from the former case that the coin is unfair. There are related, less obvious problems with small samples. For example, it seems common for people to forget that p-values themselves are random variables, i.e. they are statistical estimates with an uncertainty associated with them; these uncertainties are large when N is small.
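The coin-flip numbers are quick to check with the binomial distribution. Here's a minimal sketch using only Python's standard library; the function names are my own, and the "at least" variant is an extra not discussed above, included because it's the question one would usually ask when testing a coin for bias.

```python
from math import comb

def prob_exact_heads(n_flips, n_heads, p_heads=0.5):
    """Binomial probability of exactly n_heads heads in n_flips flips."""
    return comb(n_flips, n_heads) * p_heads**n_heads * (1 - p_heads)**(n_flips - n_heads)

def prob_at_least_heads(n_flips, n_heads, p_heads=0.5):
    """Probability of n_heads or more heads in n_flips flips."""
    return sum(prob_exact_heads(n_flips, k, p_heads) for k in range(n_heads, n_flips + 1))

if __name__ == "__main__":
    # Same fraction of heads (60%), very different probabilities.
    for n_flips, n_heads in [(10, 6), (100, 60)]:
        print(f"{n_heads}/{n_flips} heads: "
              f"exactly = {prob_exact_heads(n_flips, n_heads):.4f}, "
              f"at least = {prob_at_least_heads(n_flips, n_heads):.4f}")
```

Either way you phrase the question, a 60% heads fraction is unremarkable at 10 flips and rare at 100.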

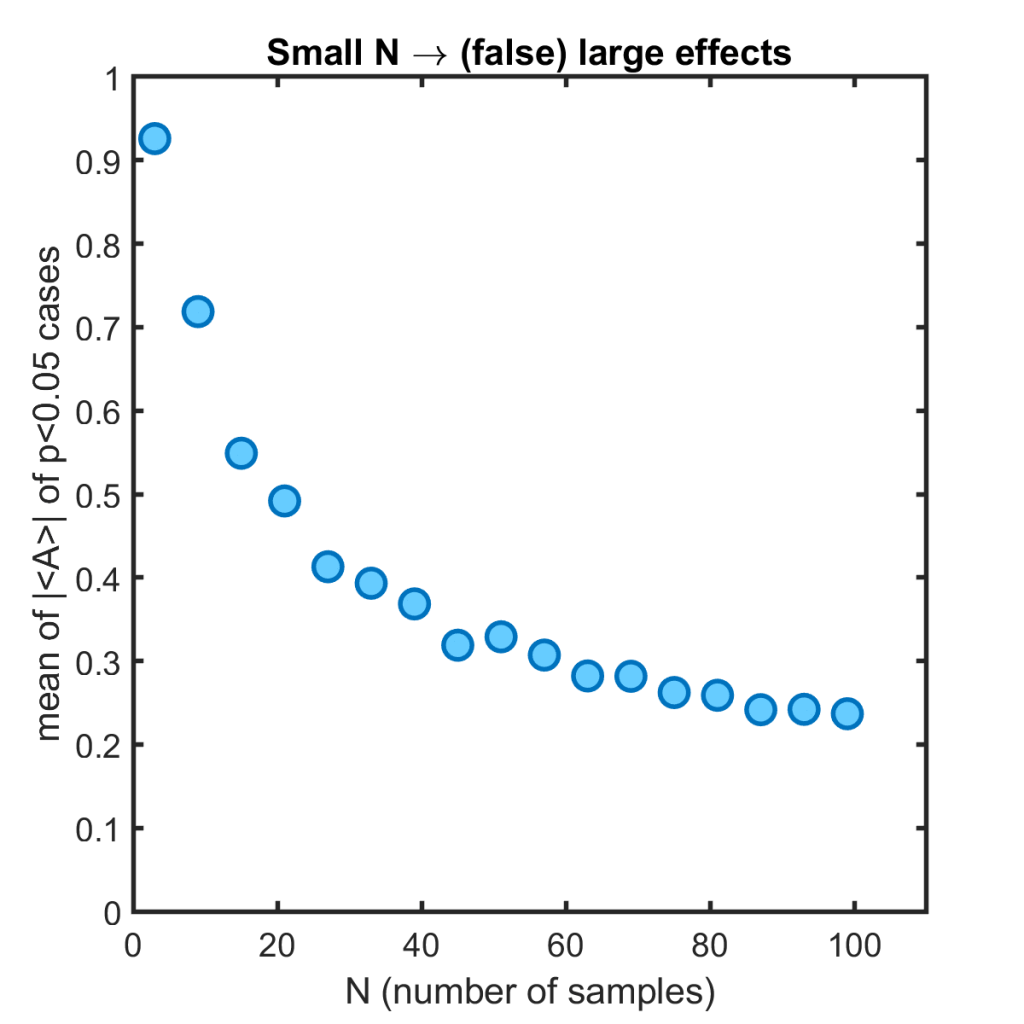

Makin and Orban de Xivry focus on another underappreciated problem with small samples: when a false positive does occur, its apparent effect size is larger than it would be at larger N. They note the significance fallacy: “If the effect size is that big with a small sample, it can only be true.” This is easy to see by writing a simple simulation. Here’s mine, in which we consider a random Gaussian variable A of mean 0 (i.e. zero true effect size) and standard deviation 1, and simulate 1000 instances at each N from 3 to 100. For each instance we calculate a p-value from a t-test, and for those that give p < 0.05 (about 5% of the instances, of course), we calculate the mean of A. We then calculate the average of the absolute value of all these means as a measure of the magnitude of (false) effect size. In other words, if by chance we found p < 0.05, here’s the average effect magnitude we’d think we’ve found, as a function of the number of samples. The resulting plot:

We can easily find a large, spurious signal when our datapoints are few.
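The simulation described above can be sketched in a few lines. This is one possible version, not necessarily the code behind the original plot: I'm assuming a one-sample t-test against a mean of zero, NumPy and SciPy as the tools, and a function name (`mean_false_effect`) of my own invention.

```python
import numpy as np
from scipy import stats

def mean_false_effect(n_samples, n_instances=1000, alpha=0.05, rng=None):
    """Average |mean of A| among null simulations that still reach p < alpha."""
    rng = np.random.default_rng() if rng is None else rng
    # Each row is one simulated study: n_samples draws from a Gaussian with
    # mean 0 and standard deviation 1, so the true effect size is zero.
    data = rng.standard_normal((n_instances, n_samples))
    # Assumption: a one-sample t-test of each row against a mean of 0.
    p_values = stats.ttest_1samp(data, popmean=0.0, axis=1).pvalue
    significant = p_values < alpha
    if not significant.any():
        return float("nan")  # no false positives in this batch
    # Magnitude of the spurious "effect" in the false-positive studies.
    return float(np.abs(data[significant].mean(axis=1)).mean())

if __name__ == "__main__":
    rng = np.random.default_rng(0)  # fixed seed so reruns give the same numbers
    sample_sizes = range(3, 101)
    effects = {n: mean_false_effect(n, rng=rng) for n in sample_sizes}
    for n in (3, 10, 30, 100):
        print(f"N = {n:3d}: mean false effect magnitude = {effects[n]:.2f}")
```

Plotting `effects` against N (with matplotlib, say) gives a curve that falls steadily as N grows. With only about 50 false positives per N the curve is noisy; raising `n_instances` smooths it out.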

The solutions

Makin and Orban de Xivry note that a solution to this problem is to calculate what statistical power is possible given one’s sample size. That’s true, but an even better solution is to use more samples in your study.
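For a sense of what a power calculation looks like, here's a rough simulation-based sketch. The assumptions are all mine: a one-sample t-test, Gaussian data with unit standard deviation, and a hypothetical true effect of 0.5 standard deviations chosen purely for illustration. The function name `simulated_power` is also made up for this example.

```python
import numpy as np
from scipy import stats

def simulated_power(effect_size, n_samples, n_instances=5000, alpha=0.05, seed=0):
    """Fraction of simulated studies that detect a true effect at p < alpha."""
    rng = np.random.default_rng(seed)
    # Each row is one study: Gaussian data with mean effect_size and SD 1.
    data = effect_size + rng.standard_normal((n_instances, n_samples))
    # Assumption: one-sample t-test of each row against a mean of 0.
    p_values = stats.ttest_1samp(data, popmean=0.0, axis=1).pvalue
    return float(np.mean(p_values < alpha))

if __name__ == "__main__":
    # Hypothetical true effect of 0.5 SD; vary the sample size.
    for n in (5, 10, 20, 50, 100):
        print(f"N = {n:3d}: estimated power = {simulated_power(0.5, n):.2f}")
```

Setting `effect_size` to zero should return roughly `alpha`, which is a useful sanity check that the simulation is doing what it claims.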

Everyone knows this. (Well, perhaps not, but let’s be optimistic.) Increasing N is much easier said than done, however. Experiments are very, very hard. Running a research lab, I am constantly reminded of this, and I think it’s something that’s difficult for those doing purely theoretical or computational work to grasp. A thousand things can go wrong during each experiment; each datapoint is the result of lots of hard work, careful thought, and luck.

But it is nonetheless true that there is always an alternative to doing a small N study and drawing unjustifiable conclusions from it, and that’s not to do the study at all. There are plenty of questions that are unanswerable, or whose answers must await experiments that are capable of tackling them. Many decades elapsed between the hypothesis of gravitational waves and their detection, not because no one wanted to announce their discovery, but because it had to wait for the tools that made it possible to exist. It doesn’t help science to do a mediocre, inconclusive study in the interim.

This runs into the unfortunate reality of how a lot of science is done. We’re influenced by limitations of money and time — for example, needing to get a dissertation written or a grant renewal submitted this year rather than ten years from now, with a university lab of a few people rather than a CERN-sized 10,000. I often wonder if many of the current replication problems in science are due to a mismatch of scale. Important, interesting questions in psychology, for example, involve such complex, noisy systems (i.e. us) that one would expect to need Ns of tens of thousands, rather than a handful of volunteer subjects, but this is not what our structure is set up to study.

Another solution would be to retain the small N studies, but be much more restrained about claims drawn from them. One can imagine a world in which stating: “here are a few well-collected datapoints, perhaps eventually they’ll contribute to a clear story” would be valued, though it would perhaps be a world even more flooded with data than ours is.

Discussion topic. Would your favorite question related to the gut microbiome (or whatever your field is) be better tackled by ten research groups of ten people each, or one group of a hundred? One can make a good case for either, so try debating the question!

Exercise 5.1 Write your own simulation that reproduces my graph above. Or write something else that illustrates the N-dependence of false “effect sizes.”

Exercise 5.2 Describe additional problems with small N studies, not noted above.

Today’s illustration…

Instead of waves on a beach, I painted waves on a rocky shore, inspired by this. It turned out better than the sand and foam of the earlier ones in this series. Will I stick to rocks, or revisit sand for the next painting? Stay tuned!

— Raghuveer Parthasarathy, October 27, 2020