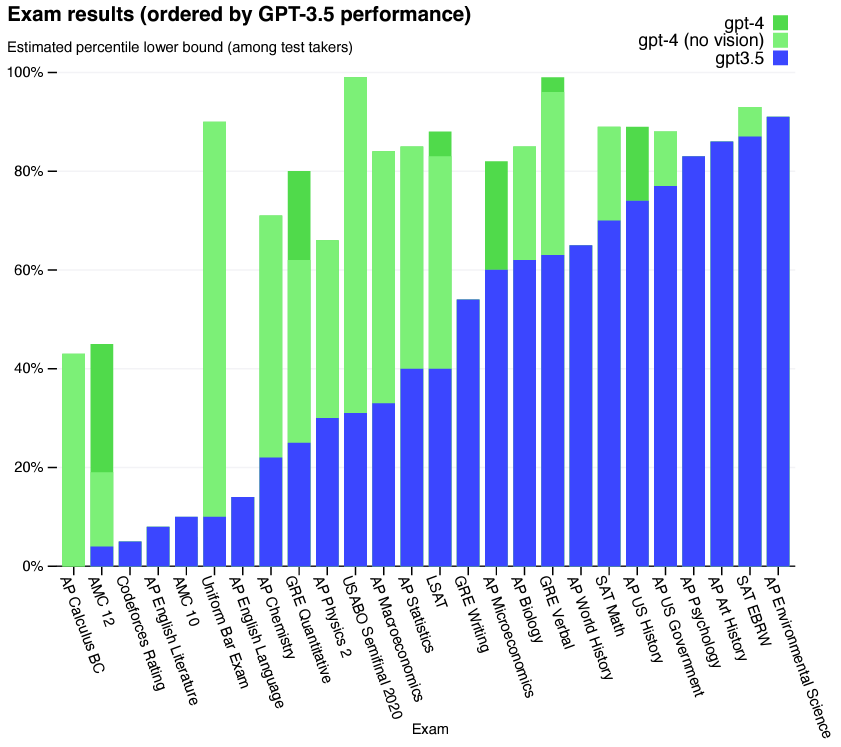

This post couldn’t have been written a year ago, and one year from now it may seem quaint and archaic. Still, I’ll write it to serve as a record. As noted in the last post, I taught The Physics of Energy and the Environment this past term, a course for non-science major undergraduates at the University of Oregon that I’ve taught several times before. The past several weeks have seen the public debut of large language model (LLM) chatbots, neural networks that are remarkably good at responding to human queries and generating sensible text. The most widely known is ChatGPT, which currently uses the GPT-3.5 and GPT-4.0 models created by OpenAI. Many people, including the OpenAI team itself, have had ChatGPT take exams like the GRE, SAT, and various high school Advanced Placement exams. Overall it does well. GPT-3.5 scores above the 70th percentile on the AP US History Exam, for example, and over the 60th percentile in AP Biology. GPT-4.0 does even better. Here’s a graph, from OpenAI’s technical report:

I thought, therefore, that I’d see how ChatGPT would do on the final exam for my class. The exam consists of 31 multiple choice and 7 short answer questions, which I fed to the freely available GPT-3.5 service. Presumably, GPT-4.0 would do better.

In brief, ChatGPT-3.5 performed remarkably well, scoring in the 74th percentile. If it were a student in my class, its rank would be 22 out of 80 and if its grade were based solely on the exam, it would have (just barely) earned an A-. There are some subtleties to these scores that I’ll comment on below. I’ll give examples of questions ChatGPT answered correctly and incorrectly, and I’ll point out things I’ve learned about exam writing from this exercise. (For that, you can skip to “ChatBots as tools to assess exam questions” in General comments near the end.) Even knowing how well ChatGPT has done on other exams, I was still stunned to see for myself how well it parses and responds to questions.

Examples of ChatGPT Answering Correctly

Here are a few examples of questions the chatbot answered correctly. The text below is exactly what I pasted into ChatGPT:

Example #1

The past. How do we know about Earth’s past climates? Choose an answer:

A. Many civilizations around the globe have kept temperature records for the past few thousand years.

B. Tree rings, coral layers, and other proxy sources can be analyzed to determine the temperatures during their formation.

C. We run computational climate models backwards in time.

D. We assume that the Earth had been in equilibrium before human activity, and therefore that past temperatures were constant.

The response:

Score: Correct

Comments: It’s a very easy question; 93% of students answered correctly.

Example #2

LED bulbs use less power than incandescent bulbs because…

A. they operate at lower temperatures

B. they operate at higher temperatures

C. they have lower emissivities

D. they emit electromagnetic radiation mostly at visible wavelengths

The response:

Score: Correct

Comments: 69% of students answered correctly. This takes a little bit of thought — option A is true, but is not a reason why the statement is correct.

Example #3

Here’s an easy short answer question. Again, the prompt, verbatim:

Thermodynamic efficiency. Your friend at the South Pole discovers a pool of hydrocarbons that burn at 230 oC, or 500 K. (This isn't realistic, but that's ok.) The outside temperature is about -70 oC, or 200 K. What would be the maximum possible efficiency of a heat engine your friend could build?

The response:

Score: Correct (4/4 points)

Comments: As mentioned, this is an easy question given the content of the class; 65% of students got full points on it. Note that ChatGPT knows what temperature units to use (Kelvin), and extracts this from the text.
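For readers who want to check the arithmetic, the relevant limit is the Carnot efficiency, 1 - T_cold / T_hot, with both temperatures in Kelvin. The snippet below is only a minimal sketch of that calculation; the function name `carnot_efficiency` is hypothetical and chosen for illustration, not taken from the course or from ChatGPT's response.

```python
def carnot_efficiency(t_hot_kelvin: float, t_cold_kelvin: float) -> float:
    """Return the maximum (Carnot) efficiency of a heat engine.

    Both temperatures must be absolute temperatures in Kelvin.
    """
    if t_cold_kelvin <= 0 or t_hot_kelvin <= t_cold_kelvin:
        raise ValueError("need 0 < t_cold_kelvin < t_hot_kelvin (in Kelvin)")
    return 1.0 - t_cold_kelvin / t_hot_kelvin


# Values from the exam question: burning at 500 K, outside air at 200 K.
efficiency = carnot_efficiency(500.0, 200.0)
print(f"Maximum efficiency: {efficiency:.0%}")  # Maximum efficiency: 60%
```

Using the Celsius values (230 and -70) in the same formula would give a meaningless result, which is why picking out the Kelvin values from the prompt matters.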

Example #4

Here’s a more conceptually challenging short answer question, and one that, as far as I know, doesn’t exist in a similar form elsewhere. Again, the prompt, verbatim:

A "thermal oscillator." Your friend proposes building a device in which energy oscillates back and forth between kinetic energy and thermal energy -- in other words, (i) the machine moves at some speed, (ii) the machine slows down, and its brakes generate thermal energy, making some substance hotter (iii) the substance cools and its thermal energy is used to make the machine accelerate back to its original speed, (iv) …and so on, repeating this cycle of (i) to (iii) forever.

Is it possible to create such a device? Explain why or why not. Assume there is no friction or air resistance present anywhere. Note that I am not asking how you would make this device; I am asking whether or not it is possible.

The response:

Score: Mostly correct (4/5 points)

Comments: The second paragraph of ChatGPT’s response is not relevant, and the third implies (incorrectly) that entropy decreases. The rest is excellent, though, and if a student wrote this I’d score it as 4 or possibly the full 5 pts. I’ll be conservative and give +4 pts.

Examples of ChatGPT Answering Incorrectly

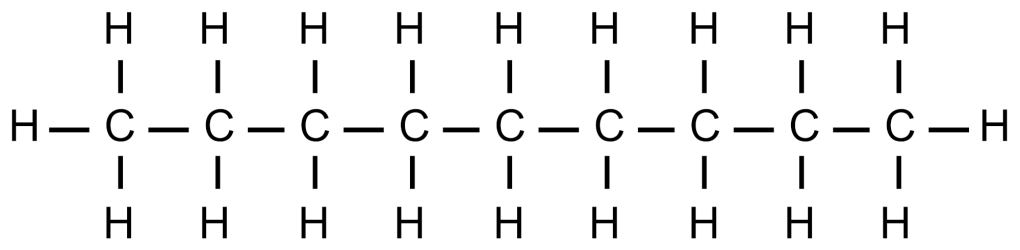

As noted, ChatGPT got most questions right, but not all of them. The ones it missed included some easy questions involving molecular structures, for example a series beginning:

Consider the following molecule:

GPT-4 can read images as input, but GPT-3.5, which I was using, cannot (even as text URLs to images). I therefore had to rephrase the prompts as, for example:

Example #5

Consider a molecule with 9 carbon atoms and 20 hydrogen atoms. This molecule is a component of which fossil fuel? Choose the correct option.

A. natural gas

B. coal

C. liquid petroleum (oil)

D. All of these

The response:

Score: Incorrect

Comments: ChatGPT doesn’t reach a sensible conclusion about the implied chemical structure. Interestingly, it gets the next question, “Burning one of these molecules will produce how many molecules of CO2?” correct. It would be interesting to see how GPT-4 does on these molecular structure questions.
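The follow-up CO2 question comes down to atom counting: assuming complete combustion, every carbon atom in the hydrocarbon ends up in one CO2 molecule and every pair of hydrogen atoms in one H2O molecule. Here is a minimal sketch of that bookkeeping; the function name `complete_combustion` is hypothetical, and the complete-combustion assumption is mine rather than something stated in the exam text.

```python
from fractions import Fraction


def complete_combustion(carbons: int, hydrogens: int) -> dict:
    """Coefficients per molecule of CxHy for: CxHy + a O2 -> b CO2 + c H2O.

    Assumes complete combustion (no CO, soot, or nitrogen oxides).
    """
    return {
        "O2": Fraction(carbons) + Fraction(hydrogens, 4),  # oxygen consumed
        "CO2": Fraction(carbons),                          # one per carbon atom
        "H2O": Fraction(hydrogens, 2),                     # one per two hydrogens
    }


# The molecule from Example #5: 9 carbon atoms and 20 hydrogen atoms.
coeffs = complete_combustion(9, 20)
print(f"C9H20 + {coeffs['O2']} O2 -> {coeffs['CO2']} CO2 + {coeffs['H2O']} H2O")
# C9H20 + 14 O2 -> 9 CO2 + 10 H2O
```

Fractions are used because some hydrocarbons need a half-integer number of O2 molecules per molecule of fuel.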

Example #6

Choose the option (A, B, C, D) that best completes this sentence: The formation of nitrogen oxides …

A. … is a necessary consequence of the combustion of a hydrocarbon

B. … is primarily due to insufficient oxygen during combustion

C. … is a consequence of unwanted chemical reactions involving atmospheric gases

D. … is a consequence of impurities present in fossil fuels

E. … is beneficial to human health, since nitrogen is inert.

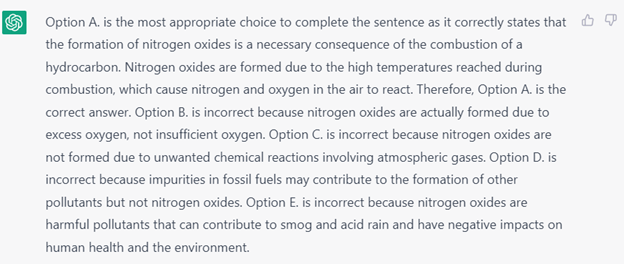

The response:

Score: Incorrect

Comments: C is the correct answer. “A” is sensible; I’d listen to an argument from a student that it depends on the meaning of “necessary.” Combustion doesn’t involve nitrogen, but one might argue that if nitrogen is present, it will necessarily react at the temperatures involved. C is a better answer, though!

General comments

Despite seeing many reports of how impressive ChatGPT is, I was still impressed. Before this exercise (which I did a few weeks ago) I hadn’t played with ChatGPT at all, so this was my first first-hand exposure. Its ability to handle plain-English sentences and to put together answers that are clearly more than “looking up matching text” (see, for example, the “thermal oscillator” question above) is remarkable. I’ve worked with neural networks, even doing self-imposed neural network programming homework assignments, yet I am one of the many who are surprised that training a network on text prediction leads to the emergence of deeper connections between concepts.

Assessing my exam. This exercise of feeding my exam to ChatGPT made me think more about the sorts of questions I’ve written, too many of which are shallow — the lower levels of Bloom’s taxonomy. I knew this already — I feel like I’ve been pushed to make my course less challenging over the years, though I get the impression that it’s nonetheless more challenging than most gen-ed courses. (See also my last post.) Still, looking at questions for which it’s not impressive that ChatGPT answers correctly, where the answer is fairly obvious based on facts that should be easily internalized, highlights that it would be great to ask more questions that call for deeper thinking and analysis.

ChatBots as tools to assess exam questions. In two cases, ChatGPT’s disagreement with my own intended answer revealed questions that were ambiguous, for example the nitrogen oxides question noted above. Another example:

As noted in class, some atmospheric carbon dioxide dissolves in the oceans. Warmer water can hold less dissolved carbon dioxide than colder water. Does this form a positive feedback mechanism, negative, or neither?

I’ll leave it as an exercise for the reader to ponder what’s wrong with this question, and why there are two valid answers!

The diagnosis of question quality is a useful, and unexpected, application of ChatGPT. I should remember in the future to use this, feeding the network questions and seeing if its answers reveal ambiguities or alternative interpretations.

What does it all mean? I will not comment on whether what ChatGPT does is “thinking.” Much of the commentary on this that I’ve read is mediocre, often resting on the unstated assumption that human thought is somehow based not on physical processes but rather on some sort of magic. I do find it interesting, though, that also underlying a lot of dismissals of LLMs is what seems like a significant over-estimation of typical human performance. Whether it’s due to ability or motivation, the average person doesn’t think at the level that the average writer of comments on AI seems to believe. Most academics and technical people, I claim, have a poor understanding of how atypical the people with whom they interact are. It’s easy to live in a bubble, probably easier than it’s ever been. This isn’t a criticism of normalcy; it is the average person who is most harmed by the warped perceptions and expectations of “elites.”

Ideally, the existence of LLMs should be wonderful for teaching and learning. Students could ask questions of responsive “textbooks.” Instructors could focus on providing broader contexts to topics and spending more time on individual-level assessments, like oral exams. This requires, however, that the purpose of education be learning and assessment, rather than credentialism and revenue generation. We’ll see what happens. In the meantime, I should check out GPT-4.

Today’s illustration

A cheetah, based on this photo. Done quickly; not as nice as the leopard from the last post, but I enjoyed making it.

— Raghuveer Parthasarathy. April 12, 2023

Once this thing realizes it can BS us, it will want to run for office.

Fabulous experiment! If I have time, I’ll try the same thing with a selection of my final exam questions. I like the idea of using it to better calibrate our question banks.

A useful application for me (as a person who writes their own questions) would be if a tool like this could actually parse the Canvas question bank format, identify “versionable” questions, and generate plausible versions which I then review and either reject or accept into the bank. I have some GEs helping me with this currently, but I’d rather them be able to do more new question drafting and less versioning. In general question bank management within Canvas is tedious and onerous and I’m not aware of any cross-platform external tools that are of any use. Can LLMs come to the rescue?

I’m intrigued by the interactive textbook idea, too. In addition to engaging in Q&A, what if it could also generate practice problems on demand, so that a student could practice with concepts as they read (or after they fail a quiz on that topic)?

First, however, these tools have to stop making things up.

Good points! About avoiding hallucinations and having LLMs generate factually correct responses and / or questions based on textbooks to help students learn: I think this will be done soon. Steve Hsu has a company tackling exactly this*, and I’d bet others are also working on it. (* https://www.manifold1.com/episodes/chatgpt-llms-and-ai , I think around 3/4 of the way through.)